GNB has moved to weekly covid updates and promises to roll out further changes and reductions in communication in the coming weeks.

Less frequent data is less accurate data.

For those who have been told to perform their own risk assessments, that's a problem. Let's see why…

Reducing frequency of data updates introduces three primary problems into the data set:

data is outdated

data is missing

data is misleading

We'll take a look at each of these and how they affect risk assessment using case count and GNB's pet metric, hospitalizations.

OUTDATED

With the move from daily to weekly updates, our risk information is between three and nine days old. We have seen how variable data can be in a nine-day period; at one point in the pandemic, New Brunswick hospitalizations changed by 60 in nine days.

The further we get from each Tuesday update, the less reliable the data becomes.

MISSING

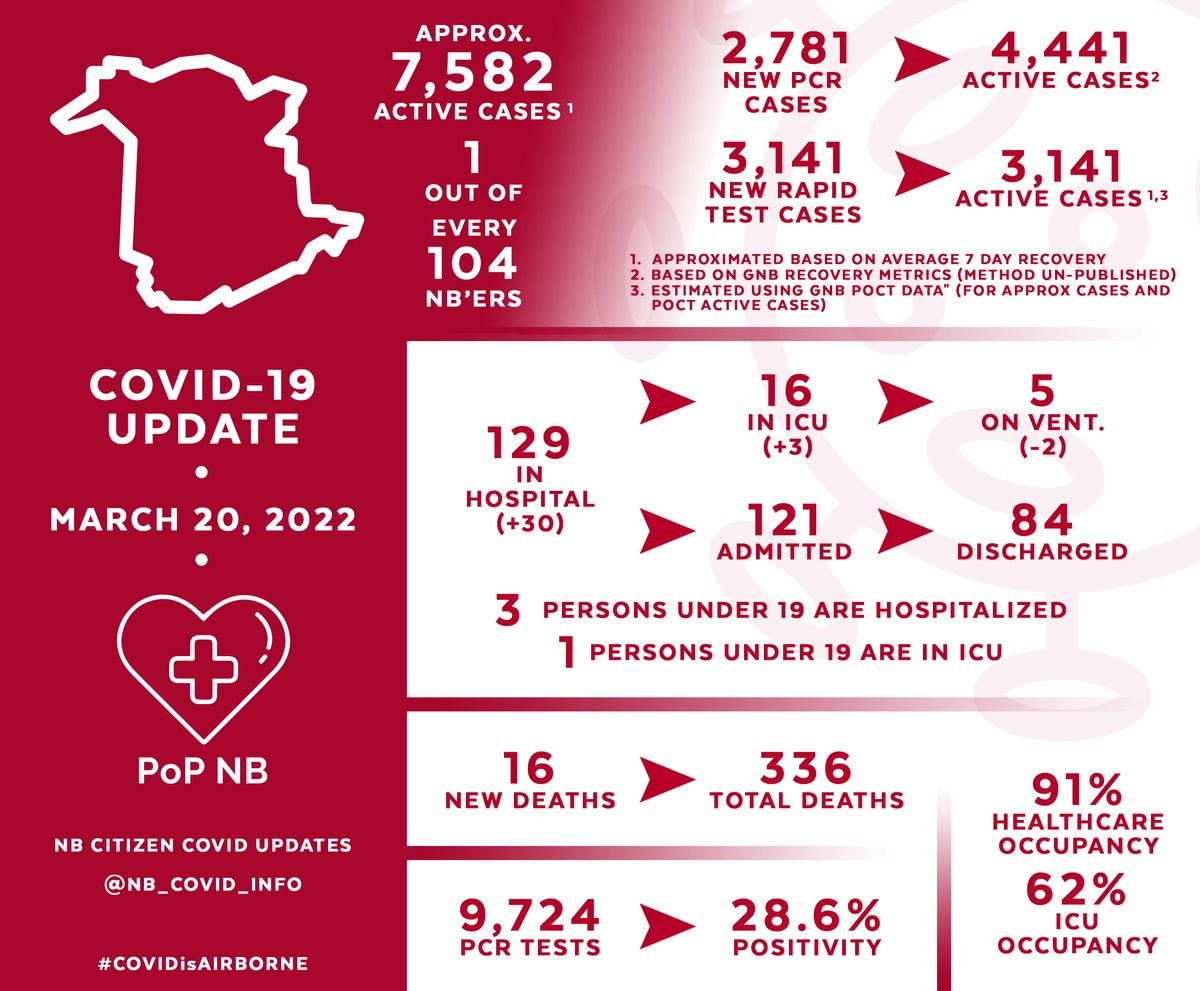

Even more concerning is the absence of data, for multiple reasons. If we look at our most recent update summary, we see the following:

Three of the stats (in hospital, in ICU, and on ventilator) are static data points, meaning they tell us how many people are in these situations at the time of the update.

What is missing is what happened between this update and the prior update. Why do we need to know this?

We can calculate how many individuals were admitted and discharged, but we do not know their ages or vaccination status. How many were kids?

Most importantly we do not know the "shape" of the data.

For example, we know hospitalizations increased by 30. This is concerning on its own.

The shape of the intervening data may increase or decrease this concern. Let's look at two paths hospitalizations may have taken between March 13 and March 20.

Case 1 (top) shows an increase, then decrease.

Case 2 (bottom) shows a decrease, then increase.

What story does each tell?

Case 1 suggests while hospitalizations initially increased, they have started to trend downward.

Case 2 suggests while hospitalizations initially decreased, more recently they have started climbing back up.

Ask yourself which of these situations you would prefer to be in.
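To make the difference concrete, here is a minimal sketch in Python. The daily numbers are invented for illustration; only the net change of 30 comes from the update. The starting value of 100 and the helper names `weekly_change` and `recent_trend` are assumptions of mine, not anything GNB publishes.

```python
# Hypothetical daily hospitalization counts, March 13 through March 20.
# Both paths start at 100 and end at 130 (net change +30).
# Every value in between is invented for illustration only.
case_1 = [100, 115, 130, 145, 150, 145, 138, 130]  # rises, then falls
case_2 = [100, 95, 90, 88, 92, 105, 118, 130]      # falls, then rises


def weekly_change(series):
    """Change between the first and last day: all a weekly update reveals."""
    return series[-1] - series[0]


def recent_trend(series, days=3):
    """Average change per day over the last `days` days."""
    return (series[-1] - series[-1 - days]) / days


for name, series in (("Case 1", case_1), ("Case 2", case_2)):
    print(name, "weekly change:", weekly_change(series),
          "recent trend per day:", round(recent_trend(series), 1))
```

Both paths report the same weekly change, but the recent trend is negative for one and positive for the other. A weekly update cannot tell them apart.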

Although case counts are cumulative, and therefore we (technically) aren't missing data, the shape of the data is lost in the same way.

Are cases increasing slowly or quickly? Are cases/day increasing or decreasing? We don't know, and this leads to the final problem.
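A rough sketch of what is lost, using made-up cumulative case counts (eight daily readings, so seven days of change). With daily numbers we could compute cases/day and whether that rate is itself rising; with only the first and last reading we get a single weekly average.

```python
# Hypothetical cumulative case counts on eight consecutive days.
# These numbers are invented for illustration only.
cumulative = [1000, 1010, 1030, 1070, 1150, 1310, 1630, 2270]

# With daily updates: new cases per day, and how that rate changes day to day.
daily_new = [b - a for a, b in zip(cumulative, cumulative[1:])]
rate_change = [b - a for a, b in zip(daily_new, daily_new[1:])]

# With a weekly update: only the endpoints are known.
weekly_total = cumulative[-1] - cumulative[0]
weekly_average = weekly_total / 7

print("new cases per day:", daily_new)
print("change in cases/day:", rate_change)
print("weekly total:", weekly_total)
print("weekly average per day:", round(weekly_average, 1))
```

In this invented series the most recent day is several times the weekly average, which is exactly the detail a single weekly number hides.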

MISLEADING

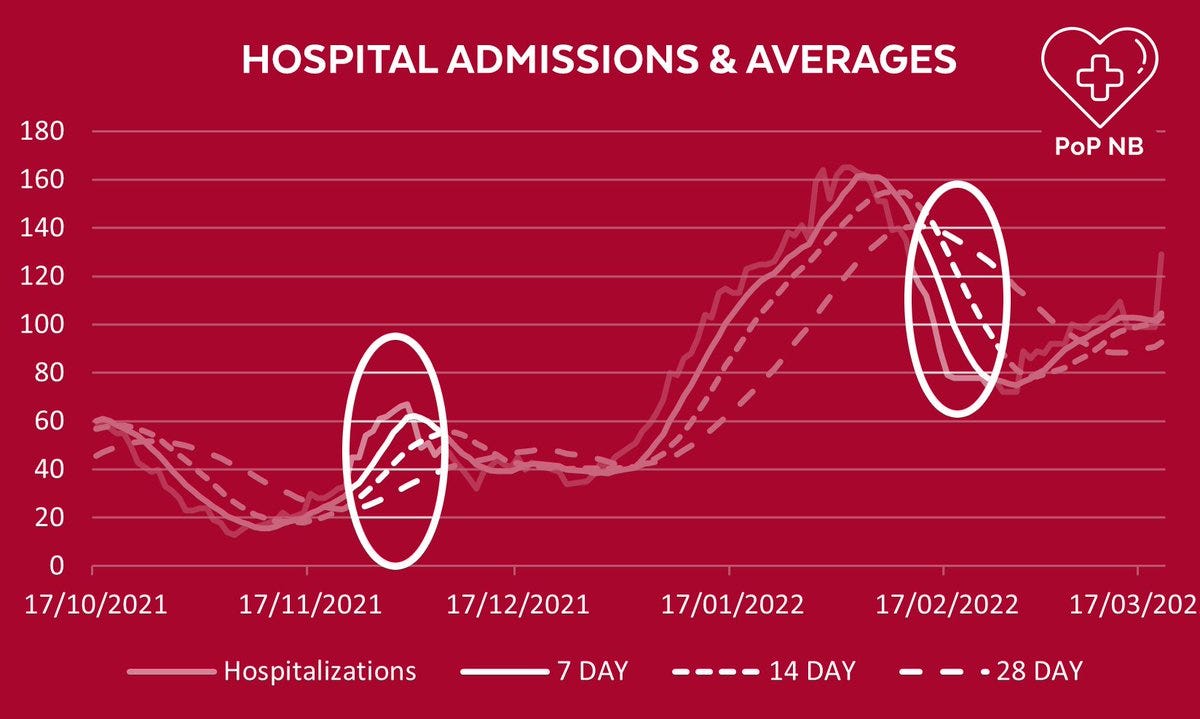

While absent data can lead to lack of context and therefore poor interpretation, trying to interpret intermittent data can force us into methods which are even more misleading. This is best explained with average hospitalizations/day.

GNB has often justified its decisions using the 7-day average of hospitalizations as a metric. If hospitalizations are only provided every 7 days, this becomes a problem.

Those of us trying to make sense of the data are forced to move to 14- or 28-day averages.
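Why the longer windows? A small sketch, assuming one report every 7 days (the day numbers and the helper `reports_in_window` are hypothetical): a 7-day window only ever contains a single report, so there is nothing to average.

```python
# Days on which a weekly report is published (day 0, 7, 14, ...).
# Hypothetical schedule for illustration only.
report_days = list(range(0, 57, 7))


def reports_in_window(day, window):
    """Report days that fall within the `window` days ending on `day`."""
    return [d for d in report_days if day - window < d <= day]


for window in (7, 14, 28):
    found = reports_in_window(28, window)
    print(f"{window}-day window ending day 28 holds {len(found)} report(s): {found}")
```

An "average" of one report is just that report, so any real averaging needs at least two weeks of data.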

The longer the period used in calculating a rolling average, the less fine detail is obtainable.

Below are actual NB hospitalizations compared to the 7-, 14- and 28-day averages. One can immediately see how longer averaging distorts the data.

Let's look at two areas in particular as examples:

The point on the left shows averages as much as 50% lower than the actual hospitalization number on the day in question. The point on the right shows averages as much as 175% higher than the actual.

You may also notice that the averaged lines all peak later and lower than the actual, unsmoothed data line.
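The later-and-lower effect is a general property of trailing averages, not something specific to NB. Here is a minimal sketch with an invented wave (a steady rise to 120, then a steady fall); the series and the helpers `trailing_mean` and `peak` are assumptions for illustration, not GNB's data or method.

```python
def trailing_mean(values, window):
    """Average of each value with up to `window - 1` values before it."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def peak(series):
    """Return (day index, value) of the first maximum in the series."""
    top = max(series)
    return series.index(top), top


# Hypothetical wave: rises 5 per day to 120 on day 20, falls 5 per day
# back to 20, then stays flat. Invented for illustration only.
actual = ([20 + 5 * d for d in range(21)]
          + [120 - 5 * d for d in range(1, 21)]
          + [20] * 30)

print("actual peak (day, value):", peak(actual))
for window in (7, 14, 28):
    day, value = peak(trailing_mean(actual, window))
    print(f"{window}-day average peak (day, value):", (day, round(value, 1)))
```

In this invented series each longer window pushes the peak later and lower than the one before it. The same mechanism is at work in the real chart.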

This side effect of smoothing through averages can be used to great advantage when there is an interest in portraying lower risk.

The less data we have, and the longer the period between updates, the less accurately we are able to understand our situation.

GNB has directed people to do their own risk assessments with data that is becoming less reliable and less frequent.

This is unacceptable.